
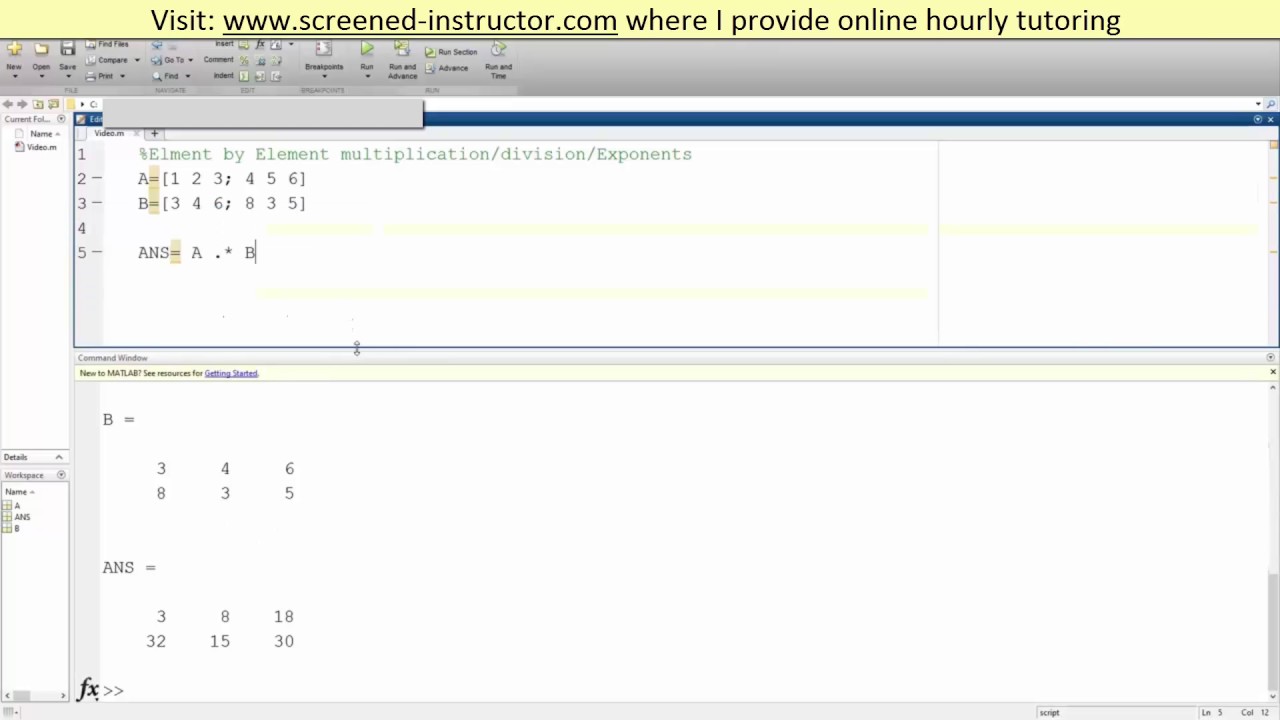

In contrast to previous methods, which assign a single row to a warp as its task unit, we combine short rows of the sparse matrix into “row groups” and use each group as a task unit, so that the non-zero elements assigned to each warp better match the available GPU resources. This method reduces thread divergence within a warp and improves load balancing among warps. Our experimental data comes from the SNAP Matrix Collection. The results show that our kernel is faster than cuSPARSE and GE-SpMM, with average speedups of 1.61 and 1.42, respectively.
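To make the row group idea concrete, here is a minimal host-side sketch in Python. It is not the authors' kernel: the greedy packing rule, the `WARP_SIZE` capacity of 32 non-zeros per group, and the function name `build_row_groups` are all assumptions chosen for illustration. It takes a CSR-style row pointer array and packs consecutive rows into groups whose total non-zero count fits the capacity.

```python
WARP_SIZE = 32  # assumed number of lanes per warp (hypothetical capacity per group)


def build_row_groups(row_ptr, capacity=WARP_SIZE):
    """Greedily pack consecutive rows into groups of at most `capacity` non-zeros.

    `row_ptr` is a CSR-style row pointer array, so row i holds
    row_ptr[i + 1] - row_ptr[i] non-zeros. A row longer than `capacity`
    ends up in a group of its own.
    """
    groups, current, load = [], [], 0
    for i in range(len(row_ptr) - 1):
        nnz = row_ptr[i + 1] - row_ptr[i]
        # Close the current group if adding this row would overflow it.
        if current and load + nnz > capacity:
            groups.append(current)
            current, load = [], 0
        current.append(i)
        load += nnz
    if current:
        groups.append(current)
    return groups


# Rows with 2, 3, 30, 1, 1, 40 and 5 non-zeros.
row_ptr = [0, 2, 5, 35, 36, 37, 77, 82]
groups = build_row_groups(row_ptr)
print(groups)  # [[0, 1], [2, 3, 4], [5], [6]]
```

In this sketch the short rows 3 and 4 share a group with row 2 instead of each occupying a task unit of their own. A real kernel would also need a policy for rows longer than the capacity, which this sketch simply leaves as single-row groups.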

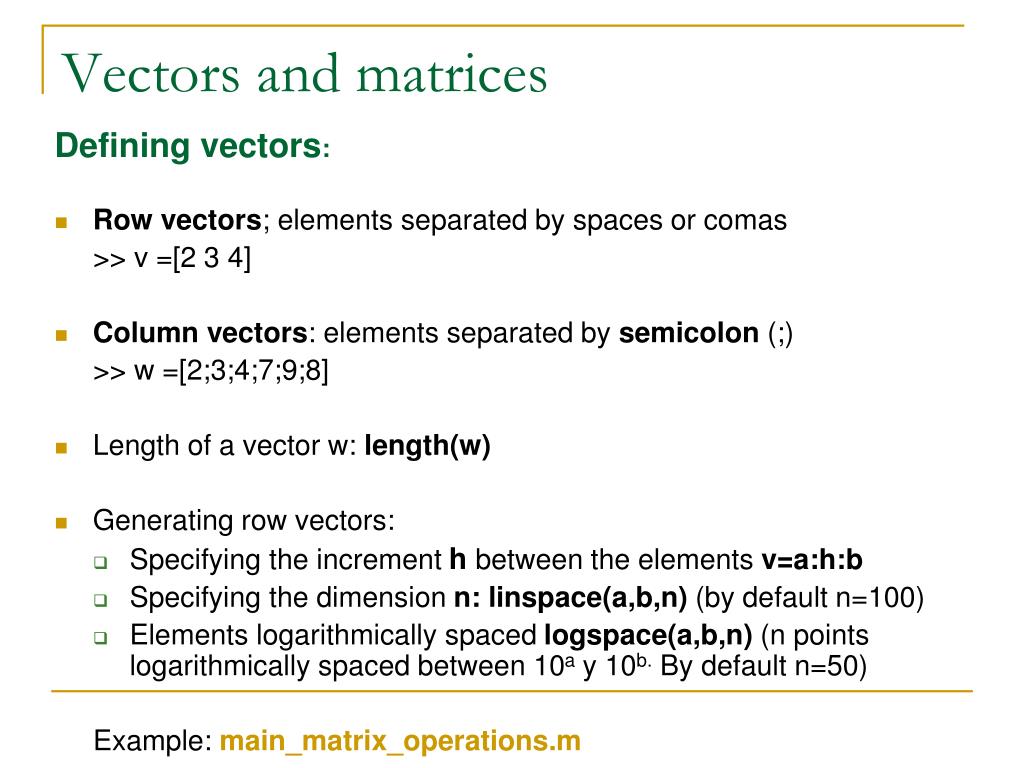

Existing methods mostly adopt a row splitting strategy to obtain better parallelism and memory access efficiency. However, because of irregularities in sparse matrices, such as short rows with few non-zero elements, these methods underutilize GPU thread resources. In this paper, we rearrange the distribution of non-zero elements in the sparse matrix and design an SpMM kernel based on a row group splitting strategy.
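The underutilization caused by short rows can be estimated with a small calculation. The sketch below rests on a simplifying assumption that is not taken from the paper: each row is handled by one warp of 32 lanes, and each lane processes one non-zero per pass. The helper name `lane_utilization` is hypothetical.

```python
import math

WARP_SIZE = 32  # assumed number of lanes per warp


def lane_utilization(row_lengths, warp_size=WARP_SIZE):
    """Fraction of lane slots doing useful work when each row gets its own warp.

    A row with n non-zeros needs ceil(n / warp_size) passes, and each pass
    occupies warp_size lane slots whether or not every lane has work.
    """
    busy = sum(row_lengths)
    slots = sum(math.ceil(n / warp_size) * warp_size for n in row_lengths if n > 0)
    return busy / slots if slots else 0.0


print(lane_utilization([2, 3, 1, 2]))  # 0.0625: four short rows keep 8 of 128 lane slots busy
print(lane_utilization([32, 64]))      # 1.0: rows that fill whole warps waste nothing
```

Under this model, a matrix dominated by rows with only a few non-zeros leaves most lanes idle, which is the situation that combining short rows is meant to address.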

The Sparse Matrix-Matrix Multiplication (SpMM) operation is widely used in different fields, especially in the recently popular GNN frameworks. Researchers have designed many kernels on the GPU to accelerate the SpMM operation. Sparse matrices provide efficient storage of double or logical data that has a large percentage of zeros. While full (or dense) matrices store every single element in memory regardless of value, sparse matrices store only the non-zero elements and their row indices. For this reason, using sparse matrices can significantly reduce the amount of memory required for data storage.
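As a reference point for what SpMM computes, here is a minimal sequential sketch in pure Python. The text above does not name a storage format, so the sketch assumes CSR (values, column indices, and a row pointer array), a common choice for row-based kernels. The function names `csr_from_dense` and `spmm_csr` are illustrative only.

```python
def csr_from_dense(dense):
    """Build CSR arrays (values, col_idx, row_ptr) from a list-of-lists matrix."""
    values, col_idx, row_ptr = [], [], [0]
    for row in dense:
        for j, v in enumerate(row):
            if v != 0:
                values.append(v)
                col_idx.append(j)
        row_ptr.append(len(values))  # end of this row in `values`
    return values, col_idx, row_ptr


def spmm_csr(values, col_idx, row_ptr, B):
    """Multiply a CSR sparse matrix A (m x k) by a dense matrix B (k x n)."""
    m, n = len(row_ptr) - 1, len(B[0])
    C = [[0] * n for _ in range(m)]
    for i in range(m):
        # Only the stored non-zeros of row i contribute to row i of C.
        for p in range(row_ptr[i], row_ptr[i + 1]):
            a, k = values[p], col_idx[p]
            for j in range(n):
                C[i][j] += a * B[k][j]
    return C


A = [[1, 0, 0], [0, 0, 2], [0, 3, 4]]
B = [[1, 2], [3, 4], [5, 6]]
values, col_idx, row_ptr = csr_from_dense(A)
C = spmm_csr(values, col_idx, row_ptr, B)
print(row_ptr)  # [0, 1, 2, 4]
print(C)        # [[1, 2], [10, 12], [29, 36]]
```

The outer loop over rows is the part that GPU kernels parallelize. The work per row is proportional to its number of non-zeros, so rows of very different lengths lead to uneven work per task unit.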
